The European Commission has opened a consultation on draft guidelines developed by its High-Level Expert Group on Artificial Intelligence (AI), which also draw attention to a number of “critical concerns”.

The document was put together by 52 experts from business, academia, and civil society who noted a number of “critical concerns” for the future of AI, drawing attention to issues related to areas such as identification, citizen scoring, and killer robots.

According to the draft guidelines, AI must have an “ethical purpose” and be “human-centric”. It should comply with “fundamental rights, principles and values”, including respect for human dignity, people’s right to make decisions for themselves, and equal treatment.

The document states AI should conform to five principles: beneficence, non-maleficence, autonomy, justice and explicability.

As AI grows in popularity and scope of application, it has become increasingly necessary to ensure that the maximum benefit of AI is attained, while minimising risks.

The first of the critical concerns relates to ‘mass citizen scoring’ by public authorities without prior consent. This ‘citizen scoring’ would seek to assess individuals on their “moral personality” or “ethical integrity”, which impinges on fundamental rights when carried out “on a large scale by public authorities.”

The issue of ‘covert artificial intelligence’ is also raised as the EU attempts to ensure that individuals are informed when they are interacting with a machine – this is a concern as androids become more ‘human-like’.

Recently, the humanoid robot ‘Sophia’ was the centre of attention in Malta, where it promoted the launch of a ‘citizenship test’ during the Blockchain Summit. Soon after, ‘Sophia’ appeared at the annual Global Residence and Citizenship Conference by Henley & Partners in Dubai to launch Moldova’s new cash-for-passport scheme.

“It should be borne in mind that the confusion between humans and machines has multiple consequences such as attachment, influence, or reduction of the value of being human,” the report states.

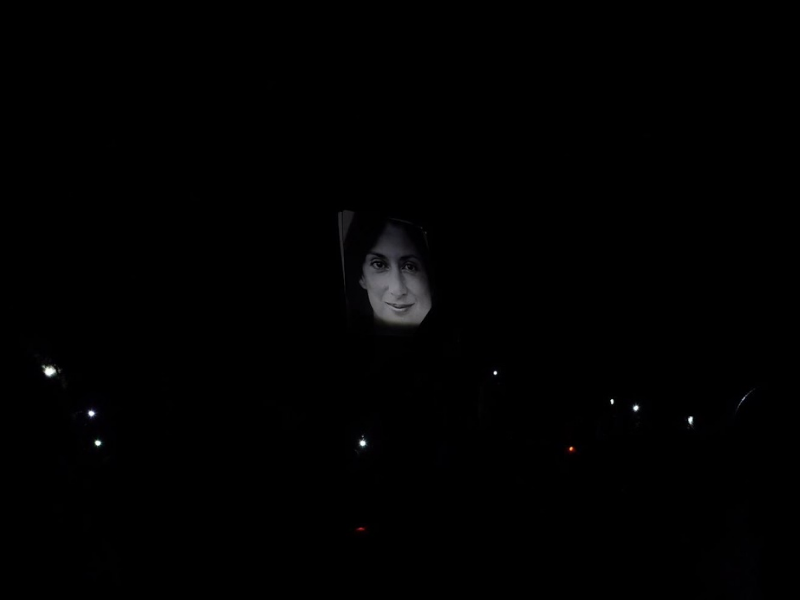

Another area of concern raised in the report is the use of AI in identification technologies, such as facial-recognition software – an idea Prime Minister Joseph Muscat raised. The ethical concern centres on the lack of consent from the people being identified.

‘Lethal Autonomous Weapons Systems,’ otherwise known as “killer robots,” are also highlighted in the report. In September, the European Parliament adopted a resolution that called for an international ban on such forms of AI, noting that machines were unable to make human decisions and that humans alone should be accountable for decisions taken during war.

The document comes not long after Malta announced its intention to formulate a National AI Strategy. Concerns about Malta’s AI Strategy were raised in an open letter to Schembri, which said there were “some troubling initial decisions”.

The consultation process is open until 18 January. In March 2019, final guidelines are expected to be handed to the Commission.